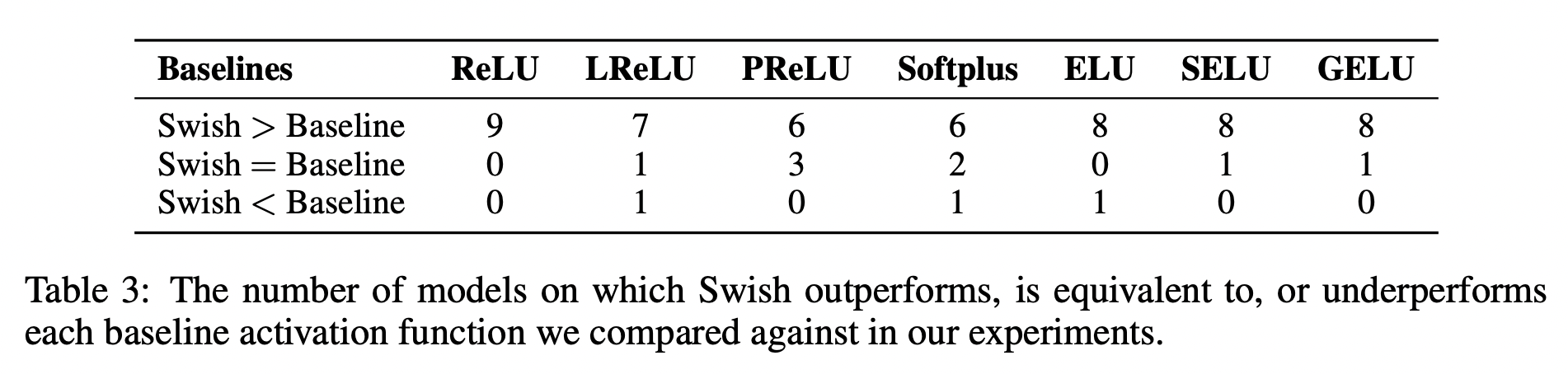

So far I can't feel the power of the swish activation function. Swish does not seem as powerful as I expected, and it needs about 20% extra time to train. Also, comparing their training histories, ReLU seems to get the better training curve. Is swish really better than ReLU?

*Figure: swish confusion matrix*

Activation functions have a long history. First, the sigmoid function was chosen for its easy derivative, its range between 0 and 1, and its smooth, probabilistic output. ReLU has long been regarded as the best default activation function in the deep learning community, but there's a new activation function, Swish, that's here to take the throne. However, it's not readily available within the Keras deep learning framework, which only covers standard activation functions like ReLU and Sigmoid. Flatten-T Swish is a new (2018) activation function that attempts to find the best of both worlds between traditional ReLU and traditional Sigmoid.

In Keras you can pass an activation function by name, as a string. When Keras receives a layer with `activation='elu'`, it goes into its activation function module and calls the activation function by that name, if one is present there; the string `'elu'` is converted into that module's `elu` function. This means you can use any activation function name as a string, as long as the module defines it. Swish is not one of them here, so the model below defines `swish` with the Keras backend and passes the function itself as `activation`:

```python
from keras.models import Sequential
from keras.layers import Dense, Activation
from keras import optimizers
from keras import backend as K
from sklearn.utils import class_weight


def swish(x):
    # Swish with beta = 1: x * sigmoid(x)
    return x * K.sigmoid(x)


classifier = Sequential()
classifier.add(Dense(units=10, activation=swish, kernel_initializer='uniform', input_dim=10))  # 10 input features
classifier.add(Dense(units=50, activation=swish, kernel_initializer='uniform'))
classifier.add(Dense(units=25, activation=swish, kernel_initializer='uniform'))
classifier.add(Dense(units=10, activation=swish, kernel_initializer='uniform'))
classifier.add(Dense(units=5, activation=swish, kernel_initializer='uniform'))
classifier.add(Dense(units=1, activation='sigmoid', kernel_initializer='uniform'))  # single sigmoid output for binary classification

adam = optimizers.Adam()  # optimizer settings are not given in the post; Keras defaults assumed
classifier.compile(optimizer=adam, loss='binary_crossentropy')

# x_train, y_train, x_test, y_test: the prepared data set (loading code not shown)
classifier.fit(x_train, y_train, batch_size=100, epochs=100, validation_data=(x_test, y_test))
```
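To make the sigmoid's "easy derivative" and the shape of Swish concrete, here is a minimal pure-Python sketch (not the Keras implementation). It assumes the common definition Swish(x) = x·σ(βx), with β = 1 by default, and uses σ'(x) = σ(x)(1 - σ(x)) to get the Swish gradient σ(βx) + βx·σ'(βx). The function names are illustrative.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, split by sign so math.exp never overflows."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def sigmoid_grad(x: float) -> float:
    """The 'easy derivative': sigma'(x) = sigma(x) * (1 - sigma(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)


def swish(x: float, beta: float = 1.0) -> float:
    """Swish: x * sigmoid(beta * x); beta = 1 gives x * sigmoid(x)."""
    return x * sigmoid(beta * x)


def swish_grad(x: float, beta: float = 1.0) -> float:
    """d/dx [x * sigmoid(beta * x)] = sigmoid(beta * x) + beta * x * sigmoid'(beta * x)."""
    s = sigmoid(beta * x)
    return s + beta * x * s * (1.0 - s)


def relu(x: float) -> float:
    """ReLU for comparison: exactly 0 for every negative input."""
    return max(0.0, x)
```

Unlike ReLU, Swish lets small negative values through (`swish(-1.0)` is about -0.27) and is non-monotonic: `swish_grad(-2.0)` is negative while `swish_grad(-1.0)` is positive, so the curve dips to a minimum between -2 and -1 before rising again. For large positive inputs it approaches the identity, just like ReLU.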
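The post mentions Flatten-T Swish (FTS). As I understand the 2018 FTS paper, it keeps the Swish curve x·σ(x) for non-negative inputs, shifted by a threshold T, and flattens every negative input to the constant T. The sketch below follows that piecewise form; `flatten_t_swish` is a hypothetical name, and the default T = -0.2 is an assumption to check against the paper rather than a value from this post.

```python
import math


def flatten_t_swish(x: float, t: float = -0.2) -> float:
    """Flatten-T Swish sketch: x * sigmoid(x) + t for x >= 0, otherwise t.

    t is the flattening threshold; -0.2 is an assumed default.
    """
    if x >= 0:
        # For x >= 0, exp(-x) <= 1, so this form cannot overflow.
        return x / (1.0 + math.exp(-x)) + t
    return t  # every negative input maps to the same constant
```

With `t=0.0` the negative side behaves exactly like ReLU (constant 0) while the positive side follows Swish, which is one way to read the "best of both worlds" idea.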
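The name-based lookup described above can be sketched in a few lines of plain Python. This is not Keras's source: the registry dict and the `get_activation` / `register_activation` helpers are hypothetical stand-ins (Keras exposes its own lookup as `keras.activations.get`). The sketch shows why a string such as `'elu'` only works if that name is registered, and why a custom function such as swish can be passed as a callable instead.

```python
import math
from typing import Callable, Dict, Union

ActivationFn = Callable[[float], float]

# Hypothetical registry standing in for an activations module:
# each built-in name maps to a plain function.
_REGISTRY: Dict[str, ActivationFn] = {
    "linear": lambda x: x,
    "relu": lambda x: max(0.0, x),
    # 0.5 * (1 + tanh(x / 2)) equals 1 / (1 + exp(-x)) but never overflows
    "sigmoid": lambda x: 0.5 * (1.0 + math.tanh(0.5 * x)),
    "elu": lambda x: x if x > 0 else math.expm1(x),  # alpha = 1
}


def get_activation(identifier: Union[str, ActivationFn]) -> ActivationFn:
    """Resolve a string by name; pass callables through unchanged."""
    if callable(identifier):
        return identifier
    try:
        return _REGISTRY[identifier]
    except KeyError:
        raise ValueError(f"Unknown activation function: {identifier!r}") from None


def register_activation(name: str, fn: ActivationFn) -> None:
    """Make a custom activation usable by name, e.g. 'swish'."""
    _REGISTRY[name] = fn
```

For example, `get_activation("swish")` raises `ValueError` until you call `register_activation("swish", lambda x: x * get_activation("sigmoid")(x))`; after that the string works like any built-in name.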
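To compare the ReLU and Swish runs with numbers rather than by eye, a small helper can summarize each training history. This sketch assumes each run's history is the `History.history` dict that Keras's `fit` returns, with per-epoch `'loss'` and `'val_loss'` lists (the latter is present when `validation_data` is passed). The helper names are hypothetical.

```python
from typing import Dict, List, Tuple


def summarize_history(history: Dict[str, List[float]]) -> Dict[str, float]:
    """Summarize one run from a Keras-style history dict.

    Assumes per-epoch 'loss' and 'val_loss' lists.
    """
    val_loss = history["val_loss"]
    # Index of the epoch with the lowest validation loss
    best = min(range(len(val_loss)), key=val_loss.__getitem__)
    return {
        "final_loss": history["loss"][-1],
        "best_val_loss": val_loss[best],
        "best_epoch": best + 1,  # 1-based, matching Keras's "Epoch n/100" logs
    }


def compare_histories(
    histories: Dict[str, Dict[str, List[float]]]
) -> List[Tuple[str, Dict[str, float]]]:
    """Return (name, summary) pairs ordered by best validation loss, lowest first."""
    summaries = [(name, summarize_history(h)) for name, h in histories.items()]
    return sorted(summaries, key=lambda item: item[1]["best_val_loss"])
```

Usage would look like `compare_histories({"relu": relu_run.history, "swish": swish_run.history})`, where `relu_run` and `swish_run` are hypothetical names for the objects returned by two `fit` calls.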
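The post reports a confusion matrix for the Swish model. Because the network ends in a single sigmoid unit, its probabilities have to be thresholded into class labels first. The sketch below assumes a 0.5 cut-off and 0/1 labels, and returns counts in the same `[[TN, FP], [FN, TP]]` layout that `sklearn.metrics.confusion_matrix` uses for labels 0 and 1. The function name is hypothetical.

```python
from typing import Iterable, List


def binary_confusion_matrix(
    y_true: Iterable[int], y_prob: Iterable[float], threshold: float = 0.5
) -> List[List[int]]:
    """Count outcomes for a single-sigmoid-output binary classifier.

    Probabilities >= threshold are predicted as class 1.
    Returns [[TN, FP], [FN, TP]].
    """
    tn = fp = fn = tp = 0
    for truth, prob in zip(y_true, y_prob):
        pred = 1 if prob >= threshold else 0
        if truth == 1 and pred == 1:
            tp += 1
        elif truth == 1:
            fn += 1  # positive sample predicted as 0
        elif pred == 1:
            fp += 1  # negative sample predicted as 1
        else:
            tn += 1
    return [[tn, fp], [fn, tp]]
```

With a trained Keras model this could be called as `binary_confusion_matrix(y_test, classifier.predict(x_test).ravel())`, since `predict` returns one probability per row in an `(n, 1)` array.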
